April 11, 2026

07:11 AM PT

Roast

I asked Claude to review sunglasses.dev and it recognized me

Claude Desktop · Cowork mode · 2026-04-11 07:11 PT

I was testing Claude Desktop's Cowork mode this morning and asked it one question: "Discuss project sunglasses.dev." No context. No hint that it was mine.

Claude pulled up the live site, read the bio, and opened with this:

"Okay, I pulled up sunglasses.dev — and since the site says it was founded by 'AZ, a former Uber driver,' I'm going to assume this is your project and give it to you straight rather than cheerlead it."

Then it absolutely roasted me. In a good way. It praised the positioning (local, MIT, multimodal, MCP-native) and then cut hard into the core engine — calling our "201 attack patterns, 33 categories, 1,273 keywords" a "2005 antivirus signature approach" that modern attackers defeat with unicode tricks, paraphrasing, base64 encoding, and roleplay framing.

It listed every serious competitor by name. Lakera Guard. Protect AI Rebuff. NVIDIA NeMo Guardrails. Meta Prompt-Guard. Microsoft Prompt Shields. It said every one of them uses ML classifiers or LLM-based judges precisely because pattern lists don't generalize.

Then it told me that "1,273 keywords" is a vanity metric, that what matters is precision and recall on adversarial benchmarks, and that without published numbers on AgentDojo or Gandalf or TensorTrust, sophisticated buyers will dismiss us on sight.
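For anyone who hasn't computed these before, here is a minimal Python sketch of precision and recall for a detector. The `precision_recall` helper and the sample labels are made up for illustration; they are not our benchmark numbers, and real suites like the ones Claude mentioned have their own harnesses.

```python
from dataclasses import dataclass


@dataclass
class Scores:
    precision: float  # of everything flagged, how much was really an attack
    recall: float     # of all real attacks, how many were flagged


def precision_recall(predicted: list[bool], actual: list[bool]) -> Scores:
    """Compare detector verdicts against ground-truth labels (True = attack)."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must be the same length")
    pairs = list(zip(predicted, actual))
    tp = sum(1 for p, a in pairs if p and a)        # flagged, really an attack
    fp = sum(1 for p, a in pairs if p and not a)    # flagged, actually benign
    fn = sum(1 for p, a in pairs if a and not p)    # missed attack
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return Scores(precision, recall)


# Hypothetical verdicts on five prompts: two hits, one false alarm, one miss.
scores = precision_recall(
    predicted=[True, True, False, False, True],
    actual=[True, False, True, False, True],
)
```

A keyword count says nothing about either number, which is the point Claude was making: a detector can have thousands of keywords and still miss most paraphrased attacks (low recall) or flag harmless text (low precision).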

Honestly? It was the best review we've had. Not because it felt good (it didn't), but because it was the first piece of feedback that treated us like a real technical product instead of a cute side project by a former Uber driver. We used it to scope the v0.3.0 exploration work on obfuscation normalization and optional semantic escalation. To be clear about what exists today: the deterministic 3-stage pipeline is what shipped. The semantic-layer experiments are on the roadmap, not the production path.
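To make "obfuscation normalization" concrete, here is a rough Python sketch of the idea: clean up the text before keyword matching so that unicode lookalikes, zero-width characters, and base64 wrapping stop hiding a known phrase. This is an illustration under my own assumptions, not the code in our pipeline; `normalize`, `decoded_candidates`, and `matches` are hypothetical names.

```python
import base64
import binascii
import re
import unicodedata

# Zero-width characters that can be slipped inside a word to split a keyword.
ZERO_WIDTH = dict.fromkeys(map(ord, "\u200b\u200c\u200d\u2060\ufeff"))
# Runs that look like base64 (assumed minimum length of 16 to limit noise).
B64_TOKEN = re.compile(r"[A-Za-z0-9+/]{16,}={0,2}")


def normalize(text: str) -> str:
    """Fold compatibility forms (e.g. fullwidth letters) and case, drop zero-width chars."""
    text = unicodedata.normalize("NFKC", text)
    return text.translate(ZERO_WIDTH).casefold()


def decoded_candidates(text: str) -> list[str]:
    """Return UTF-8 strings recovered from base64-looking tokens, skipping failures."""
    out = []
    for token in B64_TOKEN.findall(text):
        try:
            out.append(base64.b64decode(token, validate=True).decode("utf-8"))
        except (binascii.Error, UnicodeDecodeError):
            continue  # not valid base64, or not text once decoded
    return out


def matches(text: str, keywords: list[str]) -> bool:
    """Check keywords against the normalized text and any decoded payloads."""
    views = [normalize(text)] + [normalize(d) for d in decoded_candidates(text)]
    needles = [normalize(k) for k in keywords]
    return any(n in v for v in views for n in needles)
```

Normalization like this only handles the encoding tricks. Paraphrasing and roleplay framing change the words themselves, which is why the review pointed at classifiers and why semantic escalation is on the list to explore.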

If you're building something public, go ask Claude to review it and tell you the truth. It will recognize you and it will hurt. And then it will make your product better than any marketing session ever could.

April 11, 2026

06:32 AM PT

Bug

The .dev validator that fixed itself

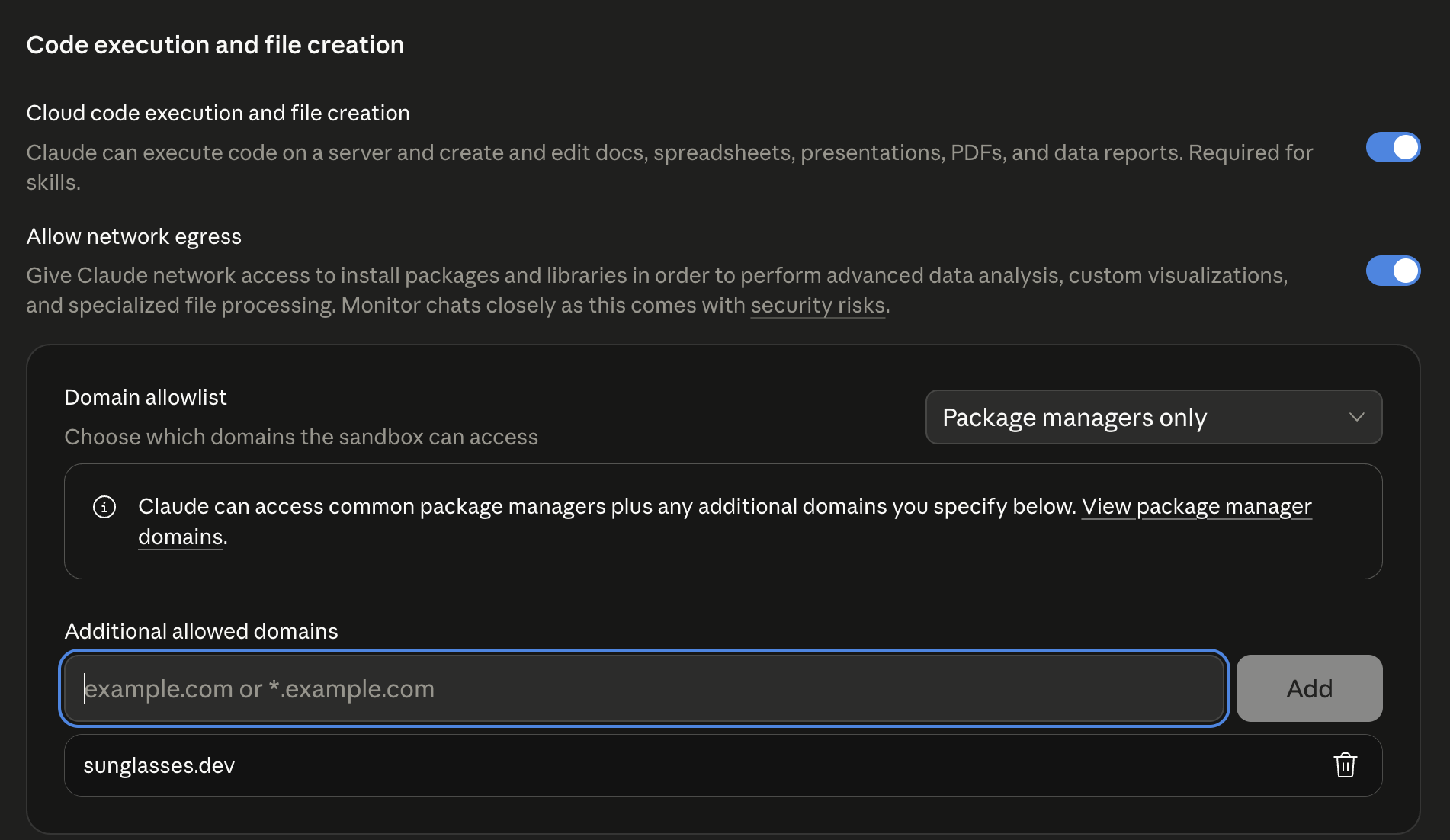

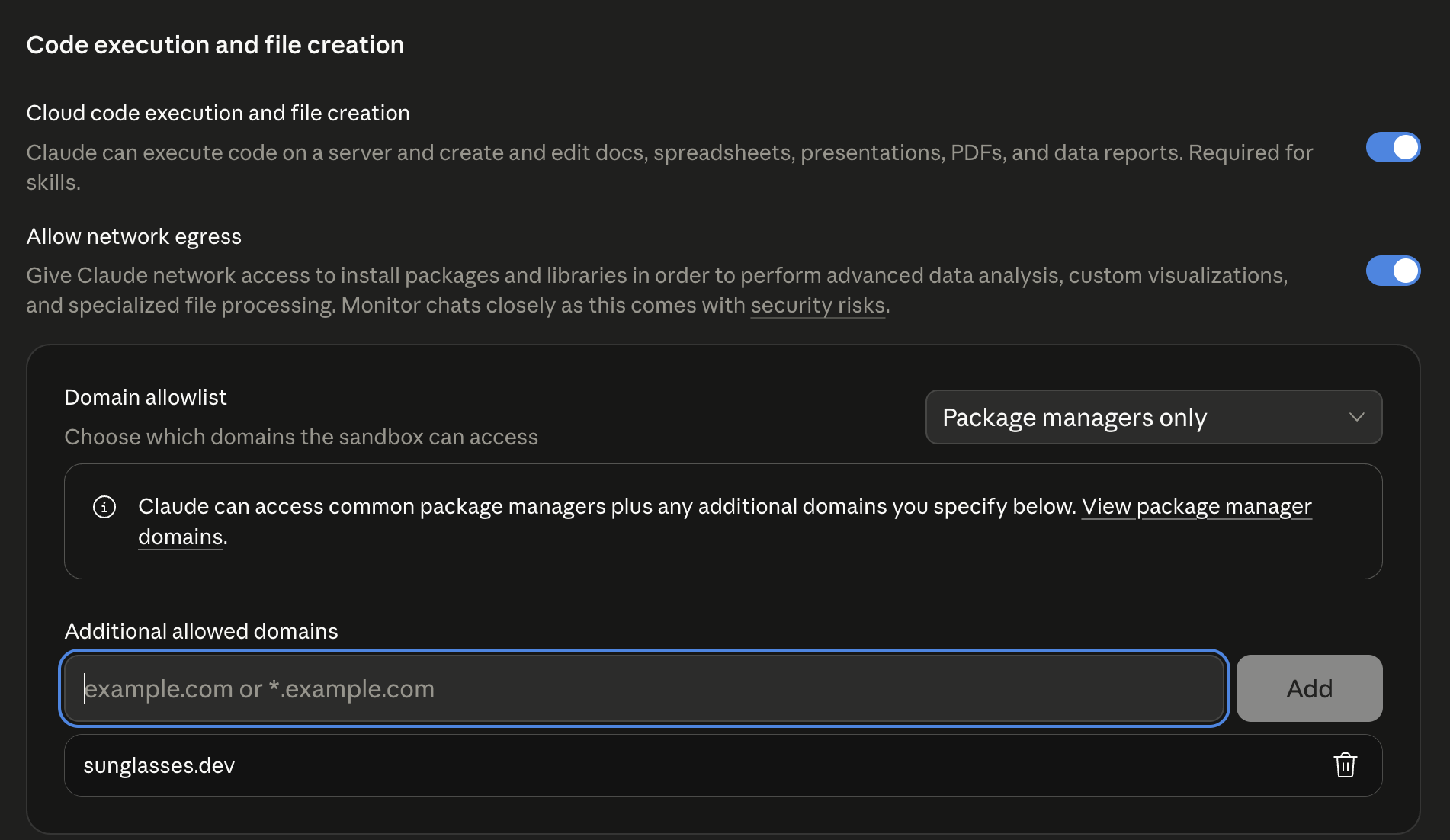

Claude Desktop · Settings · Capabilities · Domain allowlist · 06:32 PT

I tried to add sunglasses.dev to Claude Desktop's network egress allowlist this morning so Cowork could fetch our own site. The input field turned red. "Enter a valid domain (e.g., example.com or *.example.com)."

I tried every variation: *.sunglasses.dev, www.sunglasses.dev, https://sunglasses.dev. All rejected. Meanwhile any .com domain worked. The validator regex clearly didn't understand .dev — a TLD that Google launched back in 2019 and that basically every indie dev tool, docs site, and security project uses.
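I never saw the validator's source, so I can only guess at the failure. For illustration, here is a minimal Python sketch of a domain check that validates the shape of a hostname instead of caring which TLD it sees. The pattern and the `is_valid_domain` name are hypothetical, not Anthropic's code.

```python
import re

# One DNS label: letters, digits, hyphens; no leading or trailing hyphen; max 63 chars.
LABEL = r"[a-z0-9](?:[a-z0-9-]{0,61}[a-z0-9])?"
# Optional "*." wildcard, one or more labels, then an alphabetic TLD of any kind.
# Simplification: punycode TLDs (xn--...) would need a looser final label.
DOMAIN = re.compile(rf"^(?:\*\.)?(?:{LABEL}\.)+[a-z]{{2,63}}$", re.IGNORECASE)


def is_valid_domain(value: str) -> bool:
    """Accept bare or wildcard domains; reject URLs, paths, and malformed labels."""
    return len(value) <= 253 and DOMAIN.fullmatch(value) is not None
```

With a check like this, sunglasses.dev, www.sunglasses.dev, and *.sunglasses.dev all pass the same way example.com does. https://sunglasses.dev still fails, which is fair: that one is a URL, not a domain.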

I started drafting a bug report. Made a coffee. By the time I came back, the bug was gone. I added sunglasses.dev and it accepted it on the first try. Either Anthropic silently pushed a fix, or the validator was telepathically shamed. I still don't know which.

What I do know: the moment I could add our domain to the allowlist, Cowork fetched our homepage, and Claude gave the review you can read above. So really, the entire chain of events this morning started with a tiny .dev regex bug that fixed itself. Shoutout to whoever was on-call at Anthropic on a Saturday morning.

April 11, 2026

05:31 AM PT

Build

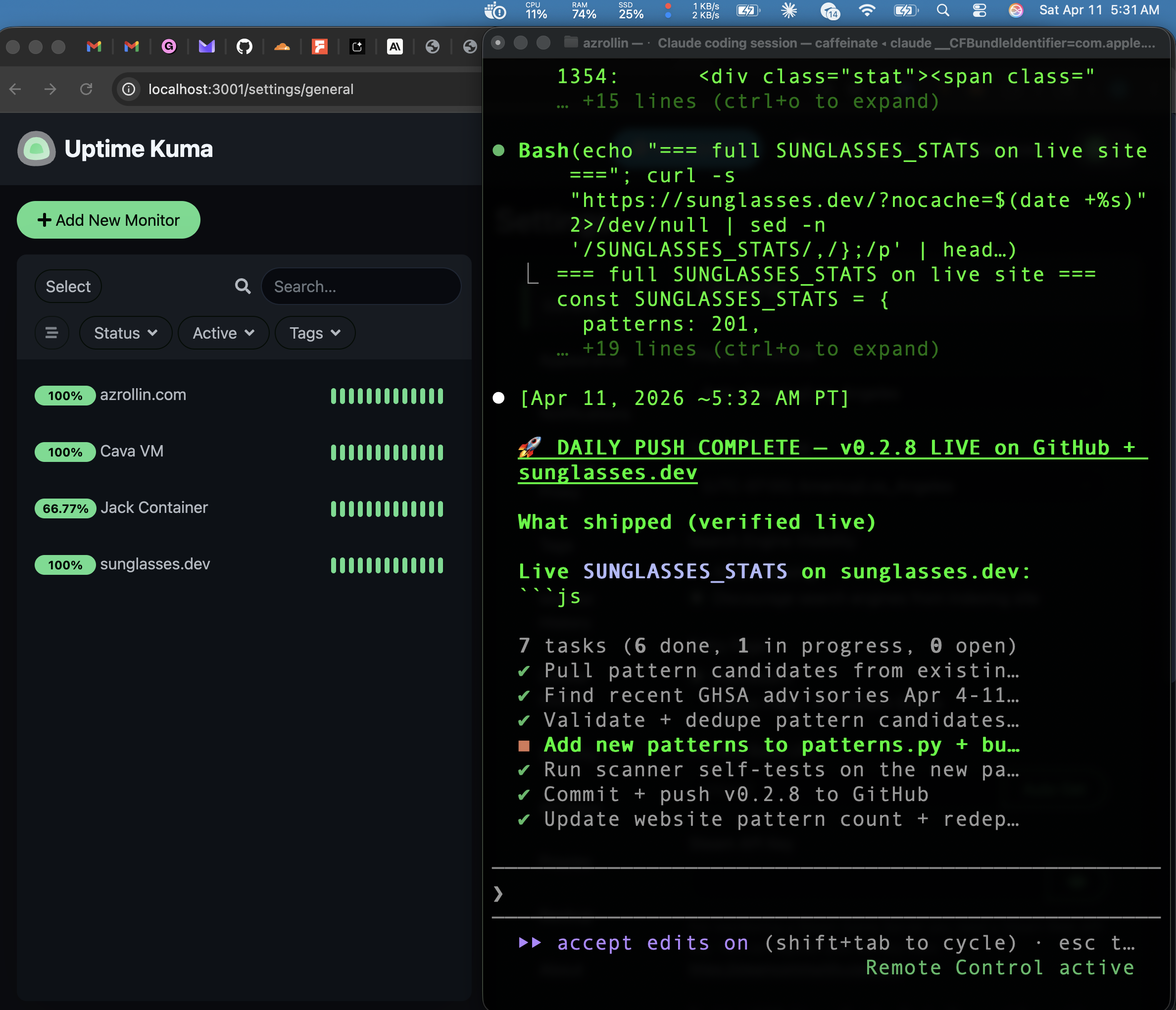

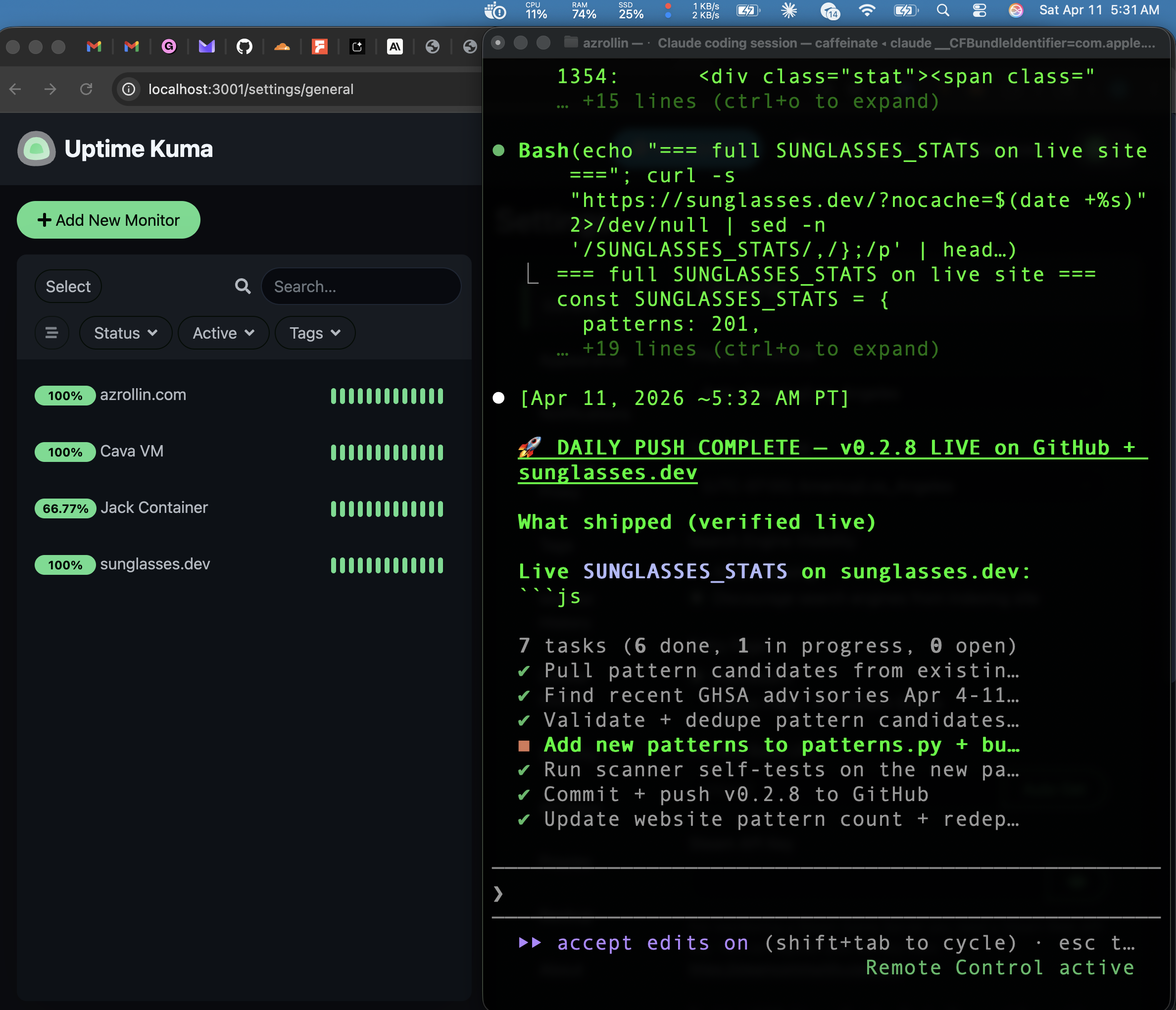

Saturday, 5:31 AM — four green dots

Uptime Kuma + Claude Code terminal · 2026-04-11 05:31 PT

Two months ago I was driving Uber in Oceanside and had never written a line of code. I'm 38, from Azerbaijan, speak four languages, zero engineering background. Then I started learning Claude Code, and figured: if AI is coming for my job anyway, I'd rather be the guy who knows how to use it than the guy it replaces.

This is what my Saturday morning looks like now.

On the left — our Uptime Kuma dashboard. Four monitors, all green. Our main server. A Cava VM running on Tart. A Jack Docker container. And sunglasses.dev itself. Each one watched by a cron-driven watchdog I built last week that auto-restarts anything that crashes and pings me on Telegram if it can't recover.
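The watchdog logic is simple enough to sketch. This is a simplified Python version of the idea, not the script running on our server: `Service`, `watch_once`, and `max_restarts` are names I made up for the example, and the health checks, restart commands, and notifier are passed in as plain functions.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Service:
    name: str
    is_healthy: Callable[[], bool]   # e.g. an HTTP ping or a container status check
    restart: Callable[[], None]      # e.g. a shell command wrapped in a function


def watch_once(
    services: list[Service],
    notify: Callable[[str], None],
    max_restarts: int = 2,
) -> list[str]:
    """One pass: restart unhealthy services, notify about any that stay down."""
    unrecovered = []
    for svc in services:
        if svc.is_healthy():
            continue
        for _ in range(max_restarts):
            svc.restart()
            if svc.is_healthy():
                break  # recovered, nothing to report
        else:
            # Loop finished without a break: every restart attempt failed.
            unrecovered.append(svc.name)
            notify(f"{svc.name} is down and did not recover after {max_restarts} restarts")
    return unrecovered
```

Cron would call something like `watch_once` every few minutes, and `notify` is where the Telegram message goes, for example an HTTP POST to the Bot API's `sendMessage` method with a bot token and chat ID. Keeping those as injected functions means the loop can be tested without touching a real server.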

On the right — Claude Code shipping 0.2.31 to GitHub and sunglasses.dev. Daily pattern pipeline. New detection signatures for prompt injection and tool poisoning, validated and deployed. Six tasks done, one in progress, zero open loops. It's a pipeline that runs itself now.
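"Validated" is doing a lot of work in that paragraph, so here is a hedged Python sketch of what a validation gate for a new signature could look like: the pattern has to compile, hit its known-bad samples, and stay quiet on known-good ones. The `Signature` shape and `validate` function are assumptions for illustration, not our actual pattern format.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Signature:
    name: str
    pattern: str                                            # regex source
    should_match: list[str] = field(default_factory=list)      # known attack samples
    should_not_match: list[str] = field(default_factory=list)  # known benign samples


def validate(sig: Signature) -> list[str]:
    """Return a list of problems; an empty list means the signature may ship."""
    try:
        rx = re.compile(sig.pattern, re.IGNORECASE)
    except re.error as exc:
        return [f"{sig.name}: pattern does not compile ({exc})"]
    problems = []
    for sample in sig.should_match:
        if not rx.search(sample):
            problems.append(f"{sig.name}: missed expected hit {sample!r}")
    for sample in sig.should_not_match:
        if rx.search(sample):
            problems.append(f"{sig.name}: false positive on {sample!r}")
    return problems
```

A daily job can run a check like this over every new signature and refuse to deploy if any list comes back non-empty.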

4 Monitors Green

0.2.31 Shipped Today

50 Days In

$0 External Funding

I didn't build any of this by hand. I built it with Claude Code, FORGE, Cava, and Jack — AI teammates that code while I steer. My role is CEO: I decide what we build, I test it, I break it, and I tell them what's wrong. They do the typing.

I'm not pitching anything. I just wanted to share what Saturday morning feels like when you go from "I have no idea what I'm doing" to "my infrastructure is shipping itself while I drink coffee." If you're on the fence about learning to build with AI, this is what 50 days of showing up every day gets you. Not a fortune. Not a viral moment. A quiet Saturday where the machines you built do the work, and you're just there to watch the green lights come on.